What Colour is the Moon? Part Two

Associate Professor Camera Department, Russian State University of Cinematography (VGIK)

In this second part of the experiment discussed in Part One, I explain why the lunar material in the Surveyor pictures was turned completely grey. This is my specialist subject: for several years I have been teaching the discipline of Chromatics, and the issue of colour distortion, at the University of Cinematography.

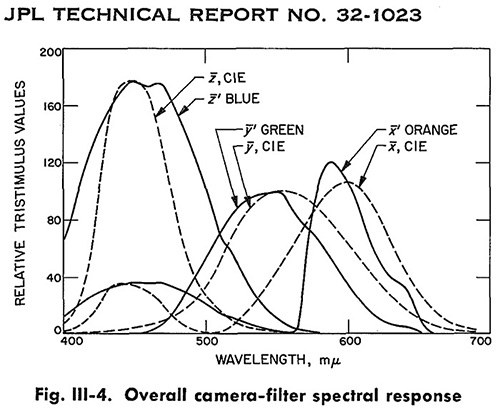

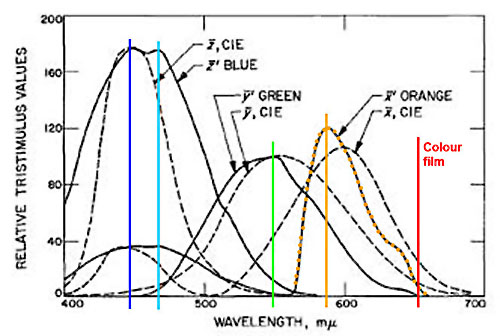

Firstly, we need to deal with some technical details. The official NASA technical report on Surveyor 1 [1] states that the transmission curves of the three filters were close to those of standard filters:

For ease of analysis I have identified this curve with an orange vertical line to show which wavelength corresponds to the maximum transmittance of the orange filter.

The maximum corresponds to about 580 nm. What colour is that? Before answering this question, let’s take a look at a beautiful photograph of a city at night (downloaded from the Internet). A park is illuminated with yellow sodium-vapour lamps:

What is the light emission maximum of a sodium lamp? A regular low-pressure sodium lamp has only one emission peak – 589 nm, and it gives a monochromatic yellow. A little mercury is added to the lamps, which causes additional small peaks to appear in the emission spectrum.

Measurements were made with a specbos 1201 spectroradiometer.

So, a sodium lamp gives maximum emission at a wavelength of about 590 nm. The filter installed on the Surveyor had its maximum transmittance at about 580 nm, so its colour is more yellow than that of sodium lamps. Instead of shooting coloured objects using the classical model of red, green and blue filters (denoted as RGB), another triad was used: blue, green and yellow filters.

Using a set of optical glasses, we’ll try to find a yellow-orange filter that has the same steep front as in the above diagram of the Surveyor filters.

Orange glasses OC-13 and OC-14 meet these requirements. But all orange glasses pass red rays well; moreover, their transmittance continues into the infrared, up to 2500 nm. The orange Surveyor filter, however, did not pass any red rays (beyond 640-650 nm) at all. Take any orange filter and look through it at a red object: it will be clearly visible. Red rays must pass through orange filters. So, to pick a filter as close as possible to the Surveyor filter, we need to combine the yellow-orange filter with another filter that blocks red rays.

Red rays are blocked by blue/blue-green glasses. The glasses C3C-25 and C3C-23 have a similar decaying curve in the red zone. Thus, to obtain an accurate spectral transmission characteristic of a filter as close as possible to the "orange" Surveyor filter, I have to add another blue glass to the orange one.

What is the resulting colour? Less orange and more yellow, as shown below:

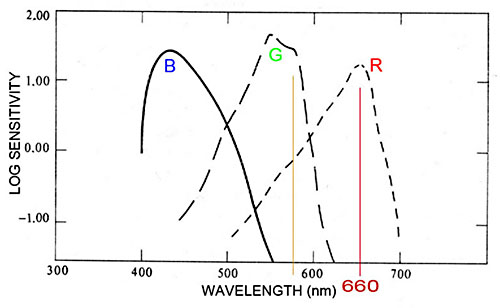

It’s interesting to see where in the red zone modern professional photographic materials have their maximum sensitivity. Let’s take Fuji negative motion picture film.

The maximum is in the red zone at around 645 nm. The maximum is not located in the yellow spectrum region, but in the middle of the red area!

Now let’s take Kodak Ektachrome 100 colour reversal film. Its maximum is also in the red zone, at about 650 nm!

According to the stated data, the Apollo mission photography was undertaken with colour reversal (transparency) film Ektachrome with a sensitivity of 64 ASA. The maximum sensitivity of the red layer is at the wavelength of 660 nm.

Spectral sensitivity of Kodak professional Ektachrome 64 film

The blue filter for the Surveyor camera also raises questions. In addition to a peak in the blue zone, it has a second transmission maximum, closer to the blue rays.

So, what do we see as a result? Instead of taking pictures in the standard system through the red, green and blue filters, the photography was done through blue, green and yellow.

Here is the classic triad of filters for colour separation (RGB).

But this is how an orange Surveyor filter (used instead of a red filter) would have looked:

So what kind of colour precision are we talking about in this case?

All red objects have a maximum reflectance in the red zone, and our "orange" Surveyor filter does not pass red light. All red objects will become very dark, unsaturated, and almost grey.

The real reason for the colour desaturation is the use of the wrong colour separation.

As we can see, the partial loss of colour, especially noticeable in the lunar soil (which became completely grey), was caused by an incorrect selection of a triad of filters for the colour separation. Instead of shooting with red, green and blue filters – blue, green and yellow filters were used.

And so in 1966, when the first colour photographs were received back from Surveyor, in which the soil was completely grey, the decision was no doubt made that the simulated lunar surface images would be of a black and white Moon. And the fake regolith was rendered grey.

Luna 16 would return the first 105 grams of soil from the surface of the Moon only in September 1970 and it would be dark brown.

By the way, once Apollo skeptics had implicated NASA in any discrepancy in the pictures and documents, NASA reluctantly responded: making corrections, adding phrases to the record, touching up some elements and erasing others, and of course also finding "lost" lunar soil, corresponding to the modern concept of the Moon.

Here is the brown soil that was found:

Strangely, some lunar soil samples collected during the Apollo 11 mission were recently discovered in the archives of the Berkeley National Laboratory and, most significantly, no one knows how they got there [2].

Why use such unusual filters – blue, green, and orange?

Why was the Surveyor photography using this strange triad of filters? Why weren’t RGB filters used? And why did NASA replace a red filter with a yellow-orange one?

What happens to the brown colour when a red photographic filter is replaced with an orange filter?

After the soft lunar landing in June 1966, the Surveyor took more than 11,000 pictures with a black and white camera. Most of these pictures have served (like puzzle pieces) to complete the surrounding lunar landscape. But a portion of these pictures was taken through these filters, in order to later synthesize full-colour pictures from the three colour components.

But, in my opinion, the separation study was not correctly conducted. We know that the Apollo astronauts used Ektachrome film and Hasselblad cameras. What would be the difference in the colour of the lunar regolith, taken with reversal film, compared with an image taken using the synthesis of three colour-separated black and white images by the Surveyor craft?

The three light-sensitive layers of Ektachrome film and the Surveyor camera would see the lunar soil through three colour filters in different parts of the spectrum. We know the spectral reflectance characteristics of the regolith from the Sea of Tranquillity, where Apollo 11 allegedly landed. See the figure above.

We also know the spectral sensitivity of the three layers of Ektachrome 64 film. Since the vertical scale of the spectral sensitivity graph is logarithmic, the zone of maximum sensitivity is bounded by the points where sensitivity falls to half its peak value. One log unit means a tenfold change in sensitivity, so a factor of 2 corresponds to 0.3 units on the vertical logarithmic scale. We select the zones of maximum sensitivity for each of the three film layers (0.3 units down from the maximum, to the left and to the right). These are sectors 410-450 nm, 540-480 nm and 640-660 nm.
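The half-maximum rule described above can be sketched in a few lines of Python. This is only an illustration: the function name `half_max_band` and the sample curve are hypothetical, with values chosen by hand so that the band comes out at 640-660 nm, and the sketch assumes a curve with a single peak.

```python
def half_max_band(wavelengths_nm, log_sensitivity, drop=0.3):
    """Return (low, high) wavelengths whose log sensitivity is within `drop` of the peak."""
    peak = max(log_sensitivity)
    inside = [w for w, s in zip(wavelengths_nm, log_sensitivity) if s >= peak - drop]
    return min(inside), max(inside)

# A step of 0.3 log units is a factor of about 2 in sensitivity.
factor = 10 ** 0.3

# Hypothetical samples of one layer's curve (illustrative values, not film data).
wavelengths = [620, 630, 640, 650, 660, 670, 680]
log_sens = [1.20, 1.55, 1.85, 2.00, 1.80, 1.40, 0.90]
band = half_max_band(wavelengths, log_sens)
print(band, round(factor, 2))
```

With real data the samples would be read off the manufacturer's published sensitivity graph for each layer.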

Ektachrome film will perceive the lunar soil as if it reflected 7.1% in the blue zone, 9.1% in the green zone and 10.3% in the red zone. This is how separations work at the exposure stage. Sometimes this stage is called ANALYSIS. And then, after developing the film, each layer accumulates dye in proportion to the received exposure. A full colour image is formed out of three separate colours. This stage is called SYNTHESIS.

In reversal photographic film, image analysis and synthesis both occur inside the emulsion layers of the film. In the case of the Surveyor craft, ANALYSIS of the lunar images (decomposition into three black-and-white image components) occurred on the Moon itself, and image SYNTHESIS took place on Earth, after the signal transmitted from the lunar surface had been received.

In front of the Surveyor TV camera lens there was a filter assembly, and the camera took consecutive images: first through one filter, then through a second, and then through a third.

Since the transmission zones of the Surveyor filters do not match the film's zones of sensitivity, the Surveyor TV camera saw the lunar soil differently, in other zones of the spectrum: 430-470 nm, 520-570 nm and 570-605 nm. Photographed this way, the lunar soil would appear to reflect 7.5% of the light in the blue zone, 8.7% in the green zone and 9.2% in the red zone.
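To see why shifting a zone changes the apparent reflectance, here is a minimal sketch that averages a reflectance curve over a band. The curve `toy_reflectance` is a hypothetical straight line (7% at 400 nm rising to 11% at 700 nm), used only to show the mechanism. It is not the measured regolith spectrum, and the function names are assumptions for illustration.

```python
def band_mean(reflectance, low_nm, high_nm, step_nm=1):
    """Average a reflectance function over [low_nm, high_nm] in fixed steps."""
    samples = [reflectance(w) for w in range(low_nm, high_nm + 1, step_nm)]
    return sum(samples) / len(samples)

def toy_reflectance(wavelength_nm):
    """Hypothetical curve: 7% at 400 nm rising linearly to 11% at 700 nm."""
    return 7.0 + (wavelength_nm - 400) * (11.0 - 7.0) / 300.0

film_red = band_mean(toy_reflectance, 640, 660)      # film's red-layer zone
surveyor_red = band_mean(toy_reflectance, 570, 605)  # Surveyor's "orange" zone
print(round(film_red, 2), round(surveyor_red, 2))
```

For any surface whose reflectance rises towards the red, the zone that sits at shorter wavelengths reads lower, which is the direction of the shift described in the text.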

Since the further results are presented in digital form, as a picture in .jpg format, we need to understand how objects with particular reflection coefficients look in a digital image.

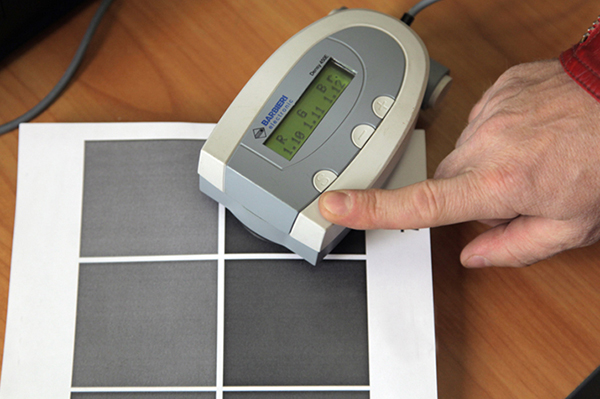

For this test I made 8 grey fields, which were printed out on a monochrome laser printer on a sheet of A4 paper. And with a densitometer I identified their actual reflection coefficients.

So if the densitometer indicates a value near one, it means that the field reduces the amount of reflected light 10 times. The densitometer shows results in logarithmic units. One logarithmic unit means 10 times less light. Thus, we have a field of 10% reflectance in three zones. The densitometer takes measurements in three areas of the spectrum – red, green and blue. Next to the letters R, G, B there is a small letter 'r' (reflection) – measurement is performed in the reflected light.

The darkest field in the test scale had a reflection density of 1.11, which in terms of the reflection coefficient is 7.7%.
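The conversion from a densitometer reading to a reflection coefficient is a single power of ten. A minimal sketch (the function name is just for illustration):

```python
def density_to_reflectance(density):
    """Convert a reflection density (log10 units) to a reflectance fraction."""
    return 10 ** (-density)

r_unit = density_to_reflectance(1.0)   # one log unit: ten times less light
r_dark = density_to_reflectance(1.11)  # the darkest field of the test scale
print(f"{r_unit:.1%}  {r_dark:.2%}")
```

A density of 1.0 gives 10%, and 1.11 gives a little under 7.8%, in line with the roughly 7.7% quoted for the darkest field.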

The reflection coefficient of one of the fields was close to 18% - 17.8%.

As we know, in a calibrated image with 8-bit colour depth this grey field has to have a brightness of 116-118 in sRGB. In a graphics editor I can make the picture a little brighter or darker, but for an accurate rendering the grey field with a reflectance of 18% has to have the values given above. (The black T-shirt reflects 2.5% of the light.)
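The 116-118 figure can be checked against the standard sRGB transfer function. This sketch assumes that a 100% diffuse reflector maps to code value 255. It only checks the 18% anchor point: the darker fields discussed in this article come from the measured test scale, not from this formula.

```python
def srgb_8bit(linear):
    """Encode a linear reflectance (0..1) as an 8-bit sRGB code value."""
    if linear <= 0.0031308:
        encoded = 12.92 * linear
    else:
        encoded = 1.055 * linear ** (1 / 2.4) - 0.055
    return round(encoded * 255)

grey_18 = srgb_8bit(0.18)    # nominal 18% grey
grey_178 = srgb_8bit(0.178)  # the measured 17.8% field
print(grey_18, grey_178)
```

Both values fall inside the 116-118 range quoted above.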

And only now can we say how objects with a particular reflectance will look in an 8-bit photographic image.

| Reflectivity | 11.2% | 10.0% | 8.7% | 7.7% |
|---|---|---|---|---|
| Units of luminance | 92 | 82 | 70 | 60 |

I particularly want to emphasize the importance of this relationship, because I have seen articles where authors believe that the reflection coefficient of the lunar regolith is close to black earth, and therefore the "moon" Apollo surface images should look very dark. The authors show pictures "corrected" in accordance with their ideas in which regolith becomes quite black. This is a wrong approach.

Black earth reflects about 2-3% of the light, and regolith is a little lighter at 8-10%. In sunlight and with the correct exposure it should have a luminance of 60 to 80 in digitised 8-bit images. Now that we know how objects with various reflectivities are displayed in a digitised picture, we’ll try to simulate the colour of the lunar soil in a graphics editor; i.e. how a colour reversal film will see it, and how it was seen by the Surveyor TV camera.

We will now translate the zonal reflection coefficients of the lunar soil obtained above into digital luminance values. The Surveyor TV camera, through colour filters, displayed the lunar soil as an object that had reflection coefficients of 7.5% in the blue zone, 8.7% in the green and 9.2% in the red. Because we have a table of correspondences between the reflectance of an object and its digital brightness in an image, we can convert the obtained reflectances into values convenient for the graphics editor. For accurate interpolation we can use the auxiliary recalculation chart.

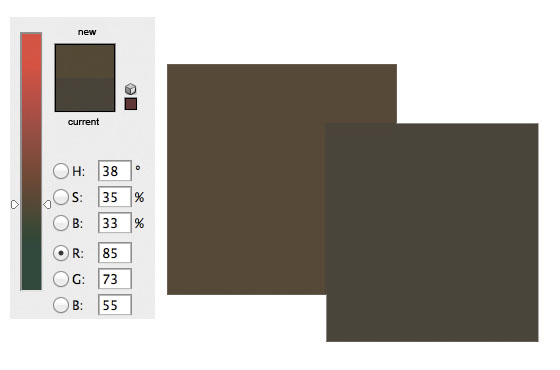

7.5% reflectivity corresponds to 58 units of luminance in an 8-bit digital image, 8.7% is 69 units, and 9.2% is 74. For the Ektachrome film we get zonal values of the reflection coefficient of the lunar soil as 7.1% in the blue zone, 9.1% in the green and 10.3% in the red one. This corresponds to the digital brightness values: B=55, G=73 and R=85.
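As a rough cross-check of these readings, the four table values given earlier can be interpolated linearly. This is a sketch under an assumption: straight-line segments between the table points (extended beyond the ends) stand in for the recalculation chart, so the results need not match the chart readings exactly.

```python
# (reflectance %, 8-bit luminance) pairs from the table in the text.
TABLE = [(7.7, 60), (8.7, 70), (10.0, 82), (11.2, 92)]

def luminance_for(reflectance_pct, table=TABLE):
    """Piecewise-linear interpolation; end segments are extended outside the table."""
    pts = sorted(table)
    # Stop at the first segment whose upper end covers the value;
    # if none does, the last segment is used for extrapolation.
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        if reflectance_pct <= x1:
            break
    return y0 + (reflectance_pct - x0) * (y1 - y0) / (x1 - x0)

surveyor = [luminance_for(r) for r in (9.2, 8.7, 7.5)]  # R, G, B zones
film = [luminance_for(r) for r in (10.3, 9.1, 7.1)]     # R, G, B zones
print([round(v, 1) for v in surveyor], [round(v, 1) for v in film])
```

The interpolated values land within about one unit of the figures read from the chart, which is as close as a four-point table allows.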

The upper square: lunar regolith seen on film; lower square: lunar regolith seen by the Surveyor camera.

The two squares show how much the colour of the lunar surface has changed, when instead of a colour reversal film, we have to shoot the regolith using the Surveyor technique. So we see that the replacement of the red filter by a yellow-orange filter led to the subject (regolith) losing its saturation and becoming almost grey.
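The loss of saturation can also be put into numbers. The sketch below uses HSV saturation from Python's standard `colorsys` module as the measure. That choice is an assumption made for illustration, and other colour models would give different absolute figures.

```python
import colorsys

def hsv_saturation(r, g, b):
    """HSV saturation (0..1) of an 8-bit RGB colour."""
    _, s, _ = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    return s

film_sat = hsv_saturation(85, 73, 55)      # film: R=85, G=73, B=55
surveyor_sat = hsv_saturation(74, 69, 58)  # Surveyor: R=74, G=69, B=58
print(round(film_sat, 2), round(surveyor_sat, 2))
```

On this measure the film square has a saturation of about 0.35 and the Surveyor square about 0.22, so the Surveyor rendering sits noticeably closer to neutral grey.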

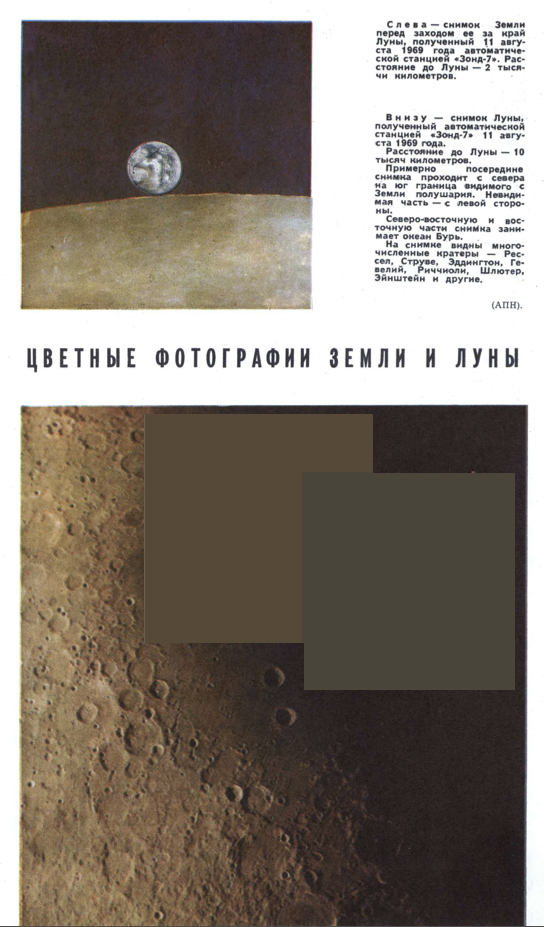

In August 1969 the Soviet Zond 7 accomplished a lunar flyby and returned colour film photographs of the Moon to Earth. I took a scanned page from the Soviet magazine Science and Life (Issue 11, 1969), where the colour inset has pictures of the lunar surface (bottom picture from a distance of 10,000 km), and overlaid two squares that show the results of the theoretical calculation of the regolith colour for colour reversal film, and also for shooting the regolith by the Surveyor method of colour separation.

What did Surveyor actually send back? And what did those colour pictures look like? The Surveyor craft made a soft lunar landing in June 1966 and then took about 11,000 photos of the lunar surface. The lander weighed about 300 kg.

Surveyor on Earth.

Up to this point the US Pioneer 1, Pioneer 3 and Pioneer 4 craft could not achieve a lunar landing. Rangers 1, 2 and 3 didn’t reach the Moon either, and Ranger 4, Ranger 5 and Ranger 6 crashed into the Moon.

And then finally Surveyor sent back pictures to Earth taken with a black and white TV camera through colour filters. For proper colour tuning in the frame there was a calibration scale attached to the foot of the craft, which contained a circle of grey colours and a few coloured sectors.

An example of such separated monochrome images can be found in the Surveyor I Mission Report No. 32-1023, September 1966.

Image taken through the orange filter.

Second image through the green filter.

Third image through the blue filter.

And then by applying a conventional technique (for example, as it is done in printing), each image is painted over in a different colour – respectively cyan, magenta and yellow. This is the standard triad of colours for subtractive synthesis. The degree of luminance of images is selected so that the density of the grey fields is the same in all three frames.
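In digital form the same balancing step might look like the sketch below. It is an additive (RGB) stand-in for the subtractive cyan, magenta and yellow synthesis described above: the orange-filter frame is simply placed in the red channel. The function name, the tiny frames and the grey-patch position are all hypothetical.

```python
def balance_and_merge(orange, green, blue, grey_xy):
    """Merge three monochrome frames into RGB pixels, equalising a grey patch.

    Each frame is a list of rows of 0..255 values; grey_xy is the (row, col)
    of a pixel known to be neutral grey, e.g. on the calibration target.
    """
    row, col = grey_xy
    refs = [frame[row][col] for frame in (orange, green, blue)]
    target = sum(refs) / 3
    gains = [target / ref for ref in refs]  # per-channel gain that makes the patch neutral
    merged = []
    for o_row, g_row, b_row in zip(orange, green, blue):
        merged.append([
            tuple(min(255, round(v * k)) for v, k in zip(px, gains))
            for px in zip(o_row, g_row, b_row)
        ])
    return merged

# Hypothetical 1x2 frames: the first pixel is the grey patch, the second is soil.
orange = [[100, 74]]
green = [[90, 69]]
blue = [[110, 58]]
image = balance_and_merge(orange, green, blue, (0, 0))
print(image)
```

After balancing, the grey patch has equal values in all three channels, and any colour left in the other pixels comes from real differences between the three filtered frames.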

We have tried to show here all three images, but because the quality of reproduction in the brochure is poor (three very contrasty pictures with deep shadows and also slightly different in scale), the result below is not particularly good:

And here is how the similar picture looks on the NASA web site (as photographed by Surveyor 3):

Apparently, this is one of the first large scale colour images of the lunar soil. The regolith seems almost grey.

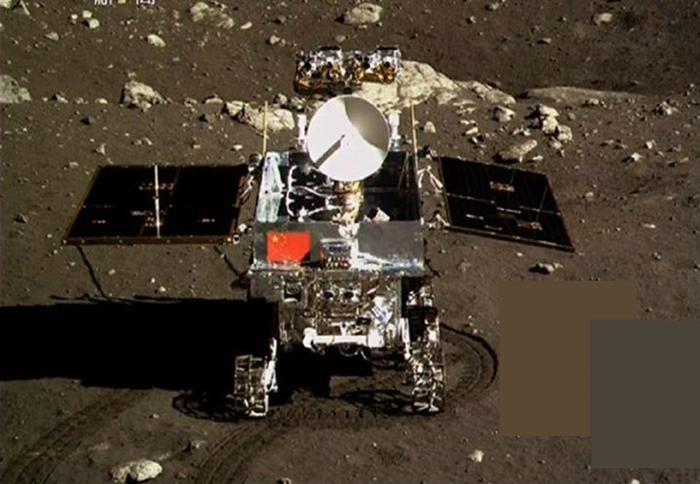

The first pictures taken by China's Chang'e-3 Moon probe present the lunar surface in a bright brown colour. In my opinion its saturation is too high.

And then the colour of the lunar surface at the landing site was corrected. At the time of writing, the soil under the Chinese Jade Rabbit Moon rover looks like this:

But it is possible that a more correct colour rendering should be like this:

So perhaps now we have a better idea of the colour of the Moon.

Leonid Konovalov

Aulis Online, January 2014

English translation from the Russian by BigPhil

Footnotes

1. L. D. Jaffe, E. M. Shoemaker, S. E. Dwornik et al. NASA JPL Technical Report No. 32-1023. Surveyor I Mission Report, Part II. Scientific Data and Results. Jet Propulsion Laboratory, California Institute of Technology, Pasadena, California, September 10, 1966.

2. Science Space Article (in Russian)

Leonid Konovalov graduated with honours from the Camera Department of VGIK in 1987. Currently he is an Associate Professor of the Camera Department of the Russian State University of Cinematography. He was a camera operator or an additional camera operator on many films and series. He was camera operator on the movie The Belovs which received the State Award in 1994.

Leonid Konovalov engineered the non-standard photographic films RETRO and DS-50 at the Shostka Chemical Plant "Svema" which were used in the production of 14 movies. In the magazine "Cinema and Television Technology" (in Russian) Leonid has published seven articles in scientific and technical topics. He also has written the book How to Make Sense of Films.
